The human brain is a wondrous instrument. It starts out as a blank data processing device that is wired to five data collection channels — our senses. From the minute we are born, our senses kick into operation, collecting information about our environment and feeding it to the brain.

One can’t really imagine what it is like receiving such a deluge of data with no starting point to work with.

Most likely the first image to be captured by the eyes of a newborn baby would be its mother’s face. But then how does its brain make sense of a face when it does not have any prior concept of an eye, a nose, a mouth, much less the entire package these elements comprise — said face?

We all know how this story pans out eventually. By the toddler years, most humans will have developed from this blank-slate state into an exquisitely tuned instrument of facial recognition, able to distinguish individual people by even the subtlest differences in facial features within milliseconds of a glance. Because we do it within mere milliseconds, it is a skill we utterly take for granted, until we realise that even the most powerful machines built by the finest engineers (motivated by the anti-terrorist money entire governments are willing to spend) still can’t do it as well as the average three-year-old.

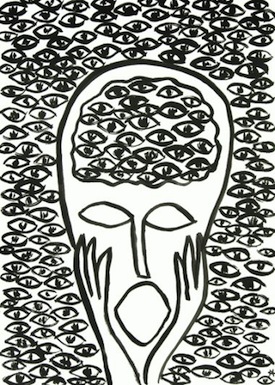

Like the rest of the plethora of impulses that collectively make up our mind (the thingy that makes us who we are), our ability to recognise faces is not an engineered ability. Nothing consciously programmed our brains with any rules or algorithms to govern how we tell one another apart just by looking. It is an ability painstakingly built from the ground up by our brain: piecing together every bit of data streamed into it by one sense, relating and associating these with bits of data streamed from other senses, and assigning symbolic meaning to each. Each symbol goes on to form ever more complex hierarchies and inter-relations of symbols: pupils plus irises form eyes, eyes form a pair, the pair is associated with a nose and a mouth, and all are packaged together to form the concept of a face, and so on and so forth. As the complexity and compounding of symbols reach a certain critical mass, we then start to assign swaths of meaning across, and criss-crossing, symbols: “Mother” to a particular face, “kuya” to another.

As our minds develop more and more complex and compound symbols, and assign meanings to them and meanings upon those meanings, we start to lose sight of the lower-level elements that compose these increasingly abstract concepts.

It’s kind of like how we consider a ball bearing to be spherical even if microscopic irregularities on its surface cause its radius to vary from point to point.

So while some of us may be busy enjoying an episode of Wowowee on TV, our dogs may sit beside us baffled as to why their masters consistently derive pleasure out of staring at a screen made up of a matrix of randomly blinking coloured dots. Our ability to perceive the gyrating girlettes on screen is made possible by our mind’s ability to take the data encoded in those blinking coloured dots and raise it to a higher level of abstraction that is simply beyond the reach of the mind of a dog.

[Douglas R. Hofstadter in his book I Am a Strange Loop explains in detail.]

The brain is basically a massively complex device for simplifying data by systematically building higher-level abstractions.

We see the pair of eyes, nose, and mouth, and right away we think “face!”. We perceive the elements, and our minds connect the dots (where they perceive the existence of such connections) and turn them into a higher-level concept.

The mind does the same with events. As events stream into our consciousness, the mind draws upon its episodic memory to create higher-level stories or narratives that serve as proxies to help us explain stuff to ourselves. Stories and narratives are the higher-level abstractions of events in the same way that compound symbols and complex concepts are higher-level abstractions of data bits and simple symbols. As we mature as sentient organisms, we progressively dwell more on ever higher levels of meaning as our brain continues its lifelong effort of synthesizing and simplifying. It is our way of making sense of the things around us and the events that we experience in a way that spares us from having to individually track the vast number of things going on around us.

Unfortunately, our minds sometimes form the wrong connections or tell the wrong story from the data they perceive.

They come up with a cause-and-effect relationship where none exists.

It’s kind of like how, just shortly after the death of a loved one, every perturbation in our eyesight, every wistful breeze we feel, or a whiff of something that reminds us of a childhood ambience is made out to be some kind of supernatural “presence” — “Nagparamdam ang yumaon” (“the deceased is making its presence felt”) as the Old Farts would say in the vernacular.

The brilliant thinker Nassim Taleb calls this the narrative fallacy. The venerable George Lucas for his part prefers a far cooler term: the Jedi Mind Trick.

Whatever we choose to call it, organised religion, cults, con artists, advertisers, and politicians have so brilliantly — and profitably — exploited this flaw in human thinking architecture since time immemorial. Tragically it is the weak- and small-minded that are most vulnerable to its effects.

benign0 is the Webmaster of GetRealPhilippines.com.

I wouldn’t say that the weak- and small-minded are the most vulnerable to this. I think that all of us are manipulated in one or more ways by this exploitation of the human thinking process. Optical illusions are one concrete and simple way to prove that our minds can be easily exploited, or should I say, deceived.

think about star wars. you have the death star plans first seen in the possession of vader’s daughter, passed to a droid vader once owned, then next seen with vader’s son and vader’s teacher. then his son blew up the death star and vader conveniently is one of the few people to survive

if you didn’t know the star wars mythos, you would not be faulted if your brain created its own story: that vader was behind the destruction of the death star.

The human brain is a very complex machine… it is like any muscle in your body. The more you use it, the stronger it grows.

Unfortunately, most Filipino political leaders are thick-skulled, with small brains. They rarely even use them. If they do use them, it is to con people, scam people, and lie to people…

I just learned from a “neuro researcher” that among the neurons of our body… there is a god neuron. And in our DNA, 95% is useless… the other 5% is start-up DNA that made us thinking human beings… who put this start-up DNA in us?…

Some believe we actually start “getting information” as early as the womb, as soon as the ears are fully developed in the last trimester. The thing is, the brain is not the only thing that stores “memories.” Our body’s neural network stores small bits of information that relieve the brain of menial chores, lessening its load so it can perform at its peak when needed. “Muscle memory” and “hand-eye coordination” are things science has long proven, enabling an athlete to play at peak performance without having to use his brain so much, overriding the brain’s normal function of processing input and output.